Avocad is useful when teams need fast campaign variants from product and brand inputs.

Comparison guide

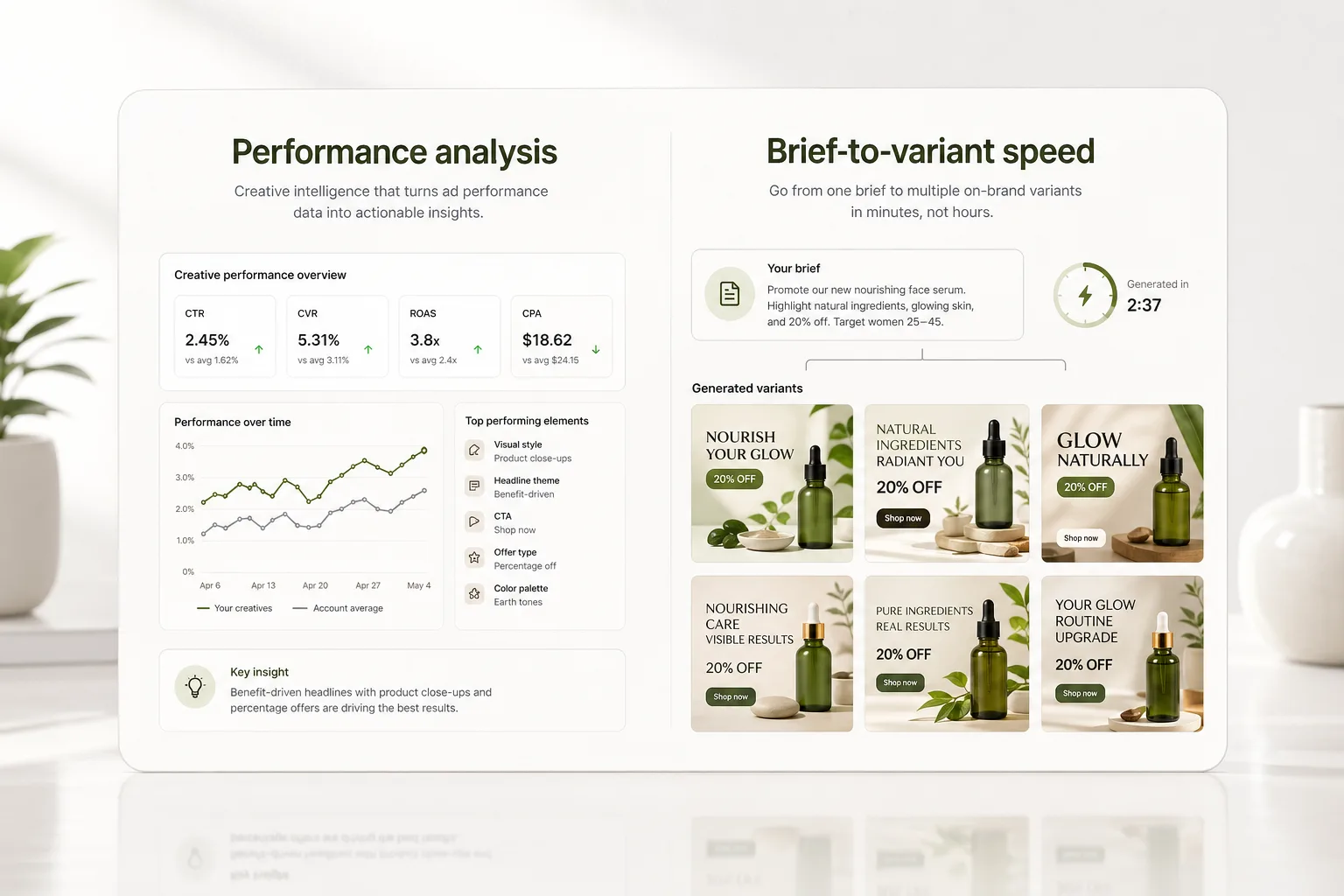

Avocad vs Pencil: creative intelligence or rapid creative production?

Pencil is known in the performance creative space. Avocad is designed for teams that want to quickly turn brand and product context into reviewable ad variants.

Creative intelligence and performance prediction should be evaluated against each team's own campaign data.

Human review remains required for copy, policy, visual accuracy, and brand fit.

Decision framework

How Avocad compares with Pencil

Compare how each workflow fits campaign briefs, creative review, and team handoff.

| Criterion | Pencil | Avocad | Review note |
|---|---|---|---|
| Primary workflow | Performance creative workflow and creative intelligence | Campaign-context generation for ads and product visuals | Compare based on the job your team repeats most often. |
| Inputs | Campaign and creative data depending on setup | URL, product or brand details, reference assets, and creative vision | Input quality strongly affects output quality. |
| Best evaluation method | Score against your campaign data and review needs | Score against brand fit, speed to useful variants, and edit effort | Do not rely on generic benchmark claims. |
| Target team | Performance marketing teams with existing campaign data | Growth, brand, and product teams needing fast creative production | Match the tool to your team structure and bottleneck. |
| Setup complexity | Requires campaign data integration for full value | Start from a URL and brand brief with minimal onboarding | Lighter setup is useful for teams testing tools quickly. |
| Product visuals | Focused on ad creative | Supports ad creative and product photoshoot workflows | Useful if product imagery production is also a bottleneck. |

When Pencil may fit

Evaluate Pencil if your team prioritizes creative intelligence workflows around existing campaign performance data.

When Avocad may fit

Evaluate Avocad if your team wants faster product and brand-context creative generation with lightweight setup.

Migration checklist

How to evaluate without risking campaign quality

- Define whether the bottleneck is insight, production, or review.

- Test both tools against one active campaign brief.

- Use the tool that improves your bottleneck without lowering review quality.

A practical evaluation workflow

Name the bottleneck

Separate insight problems from production problems before choosing software.

Run the same campaign brief

Use equal inputs and compare output quality, speed, and edit effort.

Launch only after review

Use human review to catch claim, policy, and brand issues before media spend.

Operator guide

Bottleneck-first tool evaluation

After working through this guide, you should be able to tell whether your bottleneck is insight generation, creative production speed, or review capacity, and then pick the workflow that addresses that constraint.

Teams often buy for the wrong bottleneck. Start by naming whether your constraint is strategic insight, production throughput, or review capacity.

Step 1

Diagnose the current bottleneck

Quantify where work stalls: ideation, generation, review, or deployment. Your tool decision should target the largest recurring delay.

Deliverable

Bottleneck diagnosis worksheet

Watch out for

Choosing based on feature list rather than workflow friction.
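The Step 1 diagnosis can be sketched in a few lines of Python. This is a minimal illustration, not part of either tool: the stage names come from the step above, while the `stage_hours` figures and the `largest_recurring_delay` helper are hypothetical placeholders to replace with your own logged timings.

```python
# Hypothetical hours spent per asset at each stage, logged over three campaigns.
# The numbers are illustrative only; substitute your own tracking data.
stage_hours = {
    "ideation": [2.0, 1.5, 2.5],
    "generation": [6.0, 7.5, 5.0],
    "review": [3.0, 4.0, 3.5],
    "deployment": [1.0, 0.5, 1.0],
}


def largest_recurring_delay(timings):
    """Return (stage, mean_hours) for the stage with the highest mean delay."""
    means = {stage: sum(hours) / len(hours) for stage, hours in timings.items()}
    stage = max(means, key=means.get)
    return stage, means[stage]


stage, mean_hours = largest_recurring_delay(stage_hours)
print(f"Largest recurring delay: {stage} ({mean_hours:.1f} h per asset)")
```

Using the mean across several campaigns, rather than a single run, is one way to keep a one-off delay from being mistaken for a recurring one.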

Step 2

Run equivalent production tests

Use the same live campaign brief across both workflows and compare how quickly each produces review-ready variants with acceptable brand fit.

Deliverable

Equivalent-run benchmark

Watch out for

Testing with non-comparable sample briefs.

Step 3

Decide by operational leverage

Choose the workflow that reduces your dominant bottleneck while preserving quality controls. Lower friction combined with stable QA usually wins over the long term.

Deliverable

Operational fit decision memo

Watch out for

Optimizing for novelty rather than repeatable output.

Common evaluation errors

Ignoring QA load after generation

Creative output volume rises but publishable volume does not.

Better move: Track review burden per usable variant.
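One possible way to track this metric is to divide total review time by the number of variants that pass review. The sketch below assumes review time is logged in minutes; the function name and the sample figures are hypothetical, not data from either tool.

```python
def review_minutes_per_usable_variant(review_minutes, usable_variants):
    """Review time spent for each variant that was approved for publishing."""
    if usable_variants == 0:
        # No publishable output: treat the burden as unbounded.
        return float("inf")
    return review_minutes / usable_variants


# Illustrative figures only: two test runs on the same campaign brief.
run_a = review_minutes_per_usable_variant(review_minutes=120, usable_variants=8)
run_b = review_minutes_per_usable_variant(review_minutes=150, usable_variants=5)
print(run_a, run_b)
```

A run that generates more variants but needs more review time per usable one may not be an improvement, which is the failure mode described above.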

Evaluating only one persona or offer type

Results do not generalize to your broader pipeline.

Better move: Test at least two audience/offer combinations.

Confusing predictive framing with production speed

The wrong metric drives the final tool decision.

Better move: Separate insight value from throughput value explicitly.

Frequently asked questions

Should I choose Avocad or Pencil?

Choose based on your bottleneck. If you need rapid creative production from brand and product context, test Avocad. If your workflow centers on creative intelligence, evaluate Pencil against your own campaign performance data.

Can Avocad use product context?

Yes. Avocad is built around using brand and product inputs to guide ad generation.

Does Pencil use AI for creative insights?

Pencil positions itself around creative intelligence. Evaluate its insights against your own campaign performance data before depending on them.

Which tool is faster from brief to output?

Avocad is designed for fast brief-to-variant production. Pencil may require more setup but offers different analytical capabilities.

Can I test both tools on the same campaign?

Yes. Running an identical brief through both tools and comparing output quality, edit effort, and brand fit is the most reliable evaluation method.

Related resources

Test Avocad on one campaign brief

See whether product-context generation reduces your ad creative production bottleneck.
